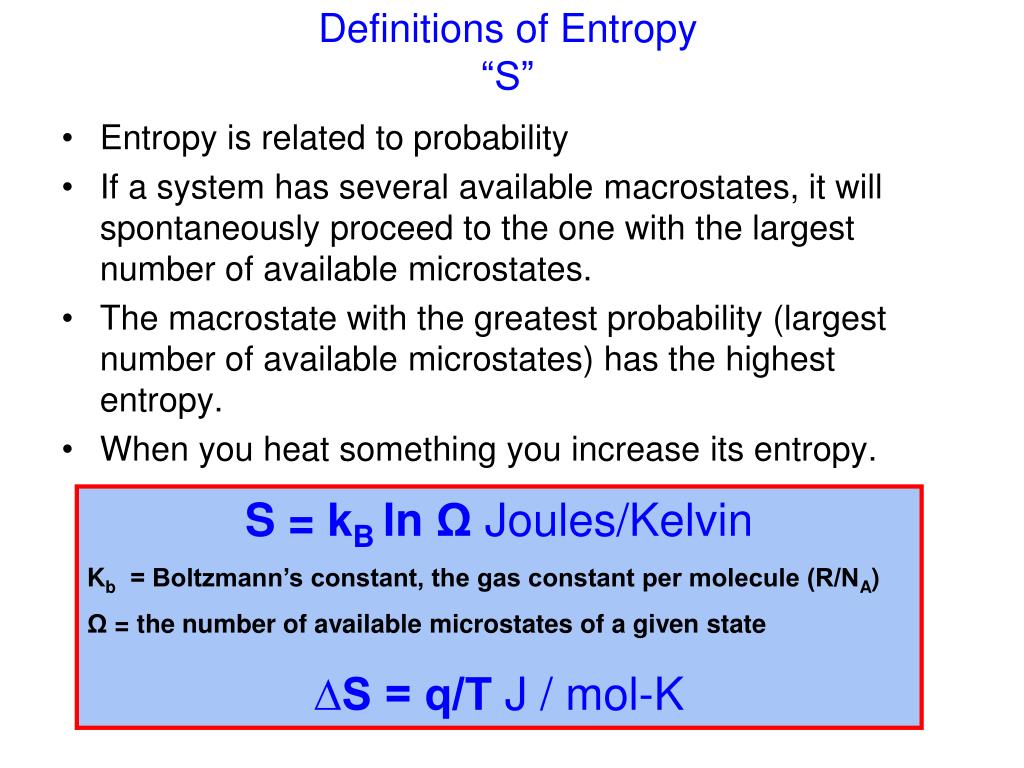

By “different” we mean that the definitions do not follow from each other, specifically, neither Boltzmann’s, nor the one based on Shannon’s measure of information (SMI), can be “derived” from Clausius’s definition.īy “equivalent” we mean that, for any process for which we can calculate the change in entropy, we obtain the same results by using the three definitions. In this Section we briefly present three different, but equivalent definitions of entropy. Entropy changes are meaningful only for well-defined thermodynamic processes in systems for which the entropy is defined. Therefore, one cannot claim that it increases or decreases. In this article, we show that statements like “ entropy always increases” are meaningless entropy, in itself does not have a numerical value. The statement “ entropy always increases,” implicitly means that “ entropy always increases with time”. The explicit association of entropy with time is due to Eddington, which we shall discuss in the next section. The origin of this association of “Time’s Arrow” with entropy can be traced to Clausius’ famous statement of the Second Law : “ The entropy of the universe always increases”.

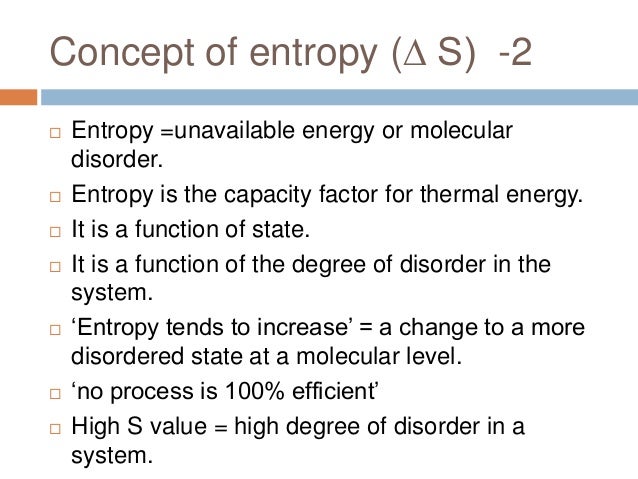

Open any book which deals with a “theory of time,” “time’s beginning,” and “time’s ending,” and you are likely to find the association of entropy and the Second Law of Thermodynamics with time. Introduction: Three Different but Equivalent Definitions of Entropy There might be decreases in freedom in the rest of the universe, but the sum of the increase and decrease must result in a net increase.1. The freedom in that part of the universe may increase with no change in the freedom of the rest of the universe. Statistical Entropy - Mass, Energy, and Freedom The energy or the mass of a part of the universe may increase or decrease, but only if there is a corresponding decrease or increase somewhere else in the universe.Qualitatively, entropy is simply a measure how much the energy of atoms and molecules become more spread out in a process and can be defined in terms of statistical probabilities of a system or in terms of the other thermodynamic quantities. Statistical Entropy Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system.Phase Change, gas expansions, dilution, colligative properties and osmosis.
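To make the "equivalent" claim concrete, here is a minimal sketch that computes one entropy change three ways. The process is the reversible isothermal expansion of an ideal gas from V1 to V2. The formulas are the standard textbook forms:

- Clausius: dS = δQ_rev / T.
- Boltzmann: S = k_B ln W.
- SMI: S = k_B ln 2 times the SMI, measured in bits.

Several choices are illustrative assumptions, not the article's own treatment. These are the ideal gas, the positional "cell" size used to count microstates, and the restriction to the locational part of the SMI. The locational part is the only part that changes at constant temperature. As the text says, only *changes* are computed: the arbitrary cell size and the 1/N! indistinguishability factor cancel in every difference.

```python
import math

# Exact SI constants (2019 redefinition).
K_B = 1.380649e-23      # Boltzmann constant, J/K
N_A = 6.02214076e23     # Avogadro constant, 1/mol
R = K_B * N_A           # molar gas constant, J/(mol K)


def clausius_delta_s(n_mol: float, temp_k: float, v1: float, v2: float) -> float:
    """Clausius: dS = dQ_rev / T. For a reversible isothermal ideal-gas
    expansion dU = 0, so the heat absorbed equals the work done:
    Q_rev = n R T ln(V2/V1)."""
    q_rev = n_mol * R * temp_k * math.log(v2 / v1)
    return q_rev / temp_k


def boltzmann_delta_s(n_mol: float, v1: float, v2: float, cell: float) -> float:
    """Boltzmann: S = k_B ln W. Assumption: N independent particles, each
    with V/cell positional cells, so W = (V/cell)**N. The 1/N! factor for
    indistinguishable particles cancels in the difference at fixed N."""
    n_particles = n_mol * N_A
    ln_w1 = n_particles * math.log(v1 / cell)
    ln_w2 = n_particles * math.log(v2 / cell)
    return K_B * (ln_w2 - ln_w1)


def shannon_bits(probs) -> float:
    """Shannon's measure of information in bits: -sum(p * log2 p)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def smi_delta_s(n_mol: float, v1: float, v2: float, cell: float) -> float:
    """SMI-based: each particle is uniformly distributed over M = V/cell
    cells (hypothetical discretization). Its locational SMI is computed
    explicitly from that distribution, multiplied by N, and converted to
    thermodynamic units with k_B ln 2 per bit."""
    m1 = round(v1 / cell)
    m2 = round(v2 / cell)
    h1 = shannon_bits([1.0 / m1] * m1)
    h2 = shannon_bits([1.0 / m2] * m2)
    n_particles = n_mol * N_A
    return K_B * math.log(2) * n_particles * (h2 - h1)


if __name__ == "__main__":
    n, temp = 1.0, 298.15                 # 1 mol at 298.15 K
    v1, v2, cell = 1.0e-3, 2.0e-3, 1.0e-6  # 1 L -> 2 L, 1 mL cells (m^3)
    results = [
        ("Clausius", clausius_delta_s(n, temp, v1, v2)),
        ("Boltzmann", boltzmann_delta_s(n, v1, v2, cell)),
        ("SMI", smi_delta_s(n, v1, v2, cell)),
    ]
    for name, ds in results:
        print(f"{name:10s} dS = {ds:.3f} J/K")  # each prints 5.763 (= R ln 2)
```

All three routes give ΔS = nR ln(V2/V1), about 5.763 J/K for doubling the volume of one mole. The explicit probability list in `smi_delta_s` is there to show Shannon's formula at work, not for efficiency. For a uniform distribution it reduces to log2 M.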
